How to Analyze Onboarding Friction in B2B SaaS

Onboarding friction is not just a drop-off metric. In B2B SaaS, the team has to see where the path to first value slows down, decide whether the friction is useful or avoidable, and collect enough evidence to choose the next fix without guessing.

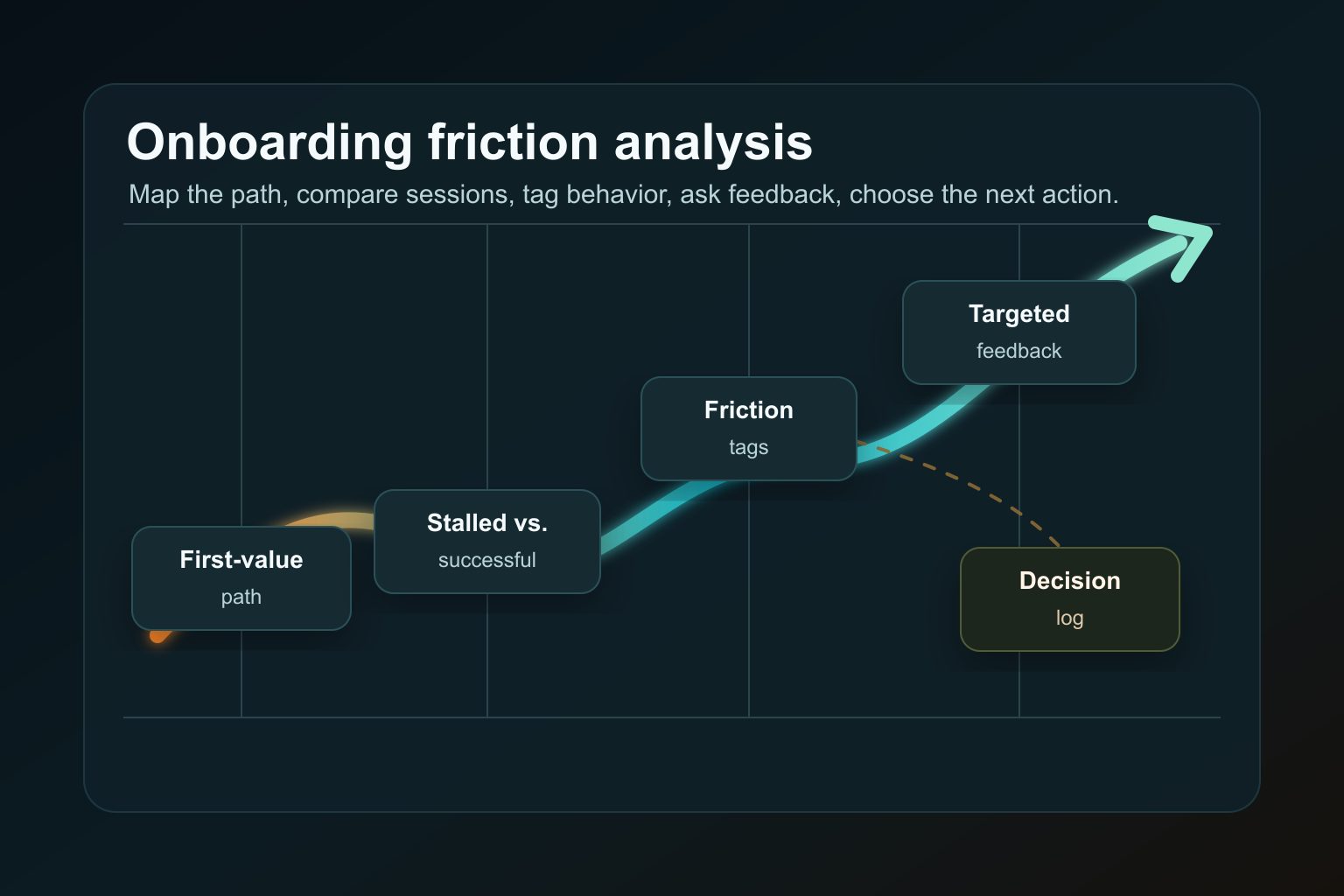

A practical onboarding friction analysis starts with the first-value path. Map the steps a new account must complete, compare stalled and successful sessions from the same segment, tag observable friction, ask a targeted question when behavior is ambiguous, and write one next action into a decision log.

This is stronger than reviewing a generic activation funnel. A funnel can show that users stopped after setup, but it cannot explain whether they were blocked by permissions, confused by an empty state, waiting for a teammate, or simply reaching a necessary commitment step. The analysis has to keep behavior, feedback, and product context close together.

Last reviewed: April 29, 2026. This guide treats behavior evidence as directional. Session replay, funnel data, and survey answers can show useful patterns, but they do not prove exact user motivation by themselves.

What onboarding friction means in B2B SaaS

Onboarding friction is anything that slows a new user before they understand, configure, or experience the product’s value. Some friction is avoidable confusion. Some friction is necessary commitment. The analysis gets weak when teams treat both as the same thing.

Useful friction can help the user choose a relevant path, confirm an integration, invite the right teammate, or make a security-sensitive decision deliberately. Avoidable friction makes the user work harder without creating confidence, context, or progress.

For B2B SaaS, friction often falls into five groups:

| Friction type | What it looks like | What to inspect |
|---|---|---|
| Avoidable confusion | The user loops, pauses, retries, or exits without reaching the next step | Copy clarity, empty states, visible next action, setup guidance |
| Necessary commitment | The product asks for a role, workspace, integration, payment method, or permission before value | Whether the commitment is explained and timed correctly |
| Hidden setup work | The user discovers imports, permissions, data mapping, or configuration after signup | Whether the path sets expectations before the work begins |
| Role or permission mismatch | An evaluator lands in an admin task, or a champion needs a technical teammate | Role routing, invitation path, handoff language |
| Missing first-value clarity | The product technically works, but the user cannot tell what outcome they should see first | Activation milestone, success state, proof of progress |

The goal is not to remove every step. The goal is to make each step either useful, clearly explained, or easier to complete.

When to analyze friction before redesigning onboarding

Analyze onboarding friction before a redesign when the team can name a stalled path but cannot yet explain the cause.

Good triggers include:

- trial users create an account but never complete setup;

- users repeatedly visit docs, settings, or integrations during the first session;

- activation looks weak in one role, source, device, or use case;

- the team sees rage clicks, dead clicks, form errors, or repeated retries near the same step;

- support questions cluster around setup, permissions, imports, or first value;

- a new onboarding flow improved completion for one segment and hurt another.

Do not start with broad questions like “why is activation down?” or “what should our onboarding look like?” Start with a narrower decision: which step is blocking which segment from reaching which first-value event?

If the question is still too broad, use Monolytics Research to look for repeated failed-session patterns. If the team already knows the path, use Monolytics Records to review exact sessions around that route or event.

The 6-step onboarding friction analysis workflow

1. Map the first-value path

Write the path from signup to first value as concrete steps, not as a single “onboarding” phase.

For example:

- Account created.

- Workspace named.

- Role or use case selected.

- Integration, import, snippet, or data source connected.

- First workflow configured.

- First meaningful result shown.

- User returns or invites a teammate.

The first-value event should be something the user can recognize, not only an internal activation metric. “Configured first dashboard and saw live data” is clearer than “activated.” “Created first campaign and previewed the result” is clearer than “completed onboarding.”

If the setup depends on another person, split that dependency into the path. A champion who needs an admin invite is not failing onboarding in the same way as an admin who sees an error during setup.
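Writing the path as an ordered list also makes it easy to see where each session stops. The sketch below assumes each session is available as a list of event names in time order; the event names and the `furthest_step` and `stall_counts` helpers are hypothetical illustrations, not a Monolytics API. A step only counts as reached when every earlier step was reached first.

```python
from collections import Counter

# Hypothetical event names for the first six steps of the example path;
# real names depend on how the product is instrumented.
FIRST_VALUE_PATH = [
    "account_created",
    "workspace_named",
    "role_selected",
    "data_source_connected",
    "first_workflow_configured",
    "first_result_shown",
]


def furthest_step(events, path=FIRST_VALUE_PATH):
    """Return the index of the last path step reached in order, or -1 if none."""
    reached = -1
    for event in events:
        # Advance only when the event is the next expected step, so
        # out-of-order or repeated events do not inflate progress.
        if reached + 1 < len(path) and event == path[reached + 1]:
            reached += 1
    return reached


def stall_counts(sessions, path=FIRST_VALUE_PATH):
    """Count sessions by the last path step they reached before stopping."""
    counts = Counter()
    for events in sessions.values():
        idx = furthest_step(events, path)
        counts["not_started" if idx < 0 else path[idx]] += 1
    return counts


sessions = {
    "s1": ["account_created", "workspace_named", "role_selected"],
    "s2": FIRST_VALUE_PATH,
    "s3": ["account_created", "workspace_named", "role_selected", "docs_opened"],
}
print(stall_counts(sessions))
```

A teammate dependency, such as waiting for an admin invite, could be added as its own entry in the path so that waiting is not counted as the same stall as a setup error.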

2. Define stalled and successful cohorts

A session is easier to interpret when it has a comparison group.

Define the stalled cohort by the step where progress stops. Define the successful cohort by users from the same segment who reached the first-value step. Keep source, role, device, plan, account state, and use case as similar as possible.

Examples:

| Question | Stalled cohort | Successful comparison |
|---|---|---|
| Users start setup but do not connect data | New accounts that reach the integration step and exit | New accounts from the same source that connect data in the first session |
| Evaluators browse onboarding but do not activate | Users who select the evaluator role and never complete the first workflow | Evaluators who complete the workflow or invite an admin |
| Mobile users abandon setup | Mobile sessions that enter setup and stop before first value | Desktop and mobile sessions from the same acquisition path that complete setup |

This comparison keeps the team from overreading normal behavior. Successful users may also pause, open docs, or check settings. The question is which behavior appears repeatedly in stalled sessions and not in comparable successful ones.
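The cohort rule can be written down as a small filter so every reviewer applies it the same way. This is a minimal sketch, assuming sessions have already been exported with segment attributes and the set of steps they reached; the `Session` fields, the chosen segment keys, and the step names are hypothetical.

```python
from dataclasses import dataclass

# Segment attributes held constant across both cohorts (an assumption
# based on the list above; add use case or account state as needed).
SEGMENT_KEYS = ("source", "role", "device", "plan")


@dataclass(frozen=True)
class Session:
    session_id: str
    source: str
    role: str
    device: str
    plan: str
    reached_steps: frozenset  # event names the session reached


def segment_of(session):
    """Return the tuple of segment attributes used to match sessions."""
    return tuple(getattr(session, key) for key in SEGMENT_KEYS)


def build_cohorts(sessions, segment, stuck_step, first_value_step):
    """Split one segment's sessions into stalled and successful cohorts."""
    stalled, successful = [], []
    for s in sessions:
        if segment_of(s) != segment:
            continue  # outside the segment: keep it out of the comparison
        if first_value_step in s.reached_steps:
            successful.append(s.session_id)
        elif stuck_step in s.reached_steps:
            stalled.append(s.session_id)
        # Sessions that never reached the stuck step belong to an earlier question.
    return stalled, successful


sessions = [
    Session("a", "ads", "admin", "desktop", "trial", frozenset({"setup_started"})),
    Session("b", "ads", "admin", "desktop", "trial",
            frozenset({"setup_started", "data_source_connected"})),
    Session("c", "ads", "admin", "mobile", "trial", frozenset({"setup_started"})),
    Session("d", "ads", "admin", "desktop", "trial", frozenset()),
]
stalled, successful = build_cohorts(
    sessions,
    ("ads", "admin", "desktop", "trial"),
    stuck_step="setup_started",
    first_value_step="data_source_connected",
)
```

Session "c" is excluded because it is on mobile, and session "d" is excluded because it never reached the stuck step, so neither can blur the comparison.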

3. Review behavior around the exact stuck step

Do not watch the whole replay library. Start near the step where the user loses momentum.

Look for observable behavior:

- loops between setup, docs, settings, and help;

- repeated visits to the same form or permission screen;

- dead clicks or clicks on non-interactive UI;

- rage clicks, retries, or validation errors;

- long pauses before commitment steps;

- exits after empty states or low-context success states;

- side paths to pricing, security, integrations, or docs.

For a repeatable review protocol, use the broader session replay analysis workflow. If the team needs to document the finding for prioritization, use the session replay evidence review template.
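Two of the behaviors above, rage clicks and looping, can be flagged from raw click and page-view data before anyone watches a replay. The sketch below is a hypothetical heuristic: "three clicks on one element within one second" is an assumed threshold, not a standard definition, and replay tools apply their own rules. Dead clicks are not covered because detecting them requires knowing which elements are interactive.

```python
from dataclasses import dataclass


@dataclass
class Click:
    timestamp: float  # seconds since session start
    target: str       # hypothetical element identifier, e.g. a CSS selector


def has_rage_click(clicks, min_clicks=3, window_s=1.0):
    """True if at least min_clicks clicks hit one target within window_s seconds."""
    recent = {}  # target -> timestamps still inside the window
    for click in sorted(clicks, key=lambda c: c.timestamp):
        times = recent.setdefault(click.target, [])
        times.append(click.timestamp)
        # Drop clicks on this target that are older than the window.
        while click.timestamp - times[0] > window_s:
            times.pop(0)
        if len(times) >= min_clicks:
            return True
    return False


def count_loops(pages, a, b):
    """Count returns to page a right after page b, ignoring other pages."""
    visits = [p for p in pages if p in (a, b)]
    # Collapse consecutive duplicates such as a reload of the same page.
    collapsed = [p for i, p in enumerate(visits) if i == 0 or p != visits[i - 1]]
    return sum(
        1
        for i in range(1, len(collapsed))
        if collapsed[i] == a and collapsed[i - 1] == b
    )


pages = ["setup", "docs", "setup", "docs", "setup", "billing"]
print(count_loops(pages, "setup", "docs"))
```

These flags only point a reviewer at sessions worth watching; they do not replace the review itself.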

4. Tag the friction without guessing motivation

Use friction tags that describe behavior, not mind reading.

Write “loops between setup and docs” instead of “does not understand setup.” Write “opens security page before inviting teammate” instead of “does not trust us.” Write “exits after empty state” instead of “not motivated.”

Useful tags include:

| Tag | Observable signal | Safer interpretation |
|---|---|---|
| Hesitation | Long pause before a meaningful action | The next step may need more context |
| Looping | Repeated movement between pages without progress | The path may be missing information or sequencing |
| Dead click | Click on an element that does not respond | The interface may imply interactivity where none exists |
| Error recovery | Error followed by retries, field edits, or exit | The error may not be actionable enough |
| Trust check | Opens security, pricing, privacy, docs, or proof before continuing | The user may need proof near the decision point |
| Role mismatch | Opens invite, permissions, or team settings instead of continuing | The current user may not be the right actor |
| Quiet exit | Leaves after an empty state, unclear success state, or unexplained wait | The path may not show progress or payoff clearly |

The tag is a clue, not a conclusion. A pattern becomes stronger when it repeats across comparable sessions and lines up with feedback, support context, or funnel data.
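One way to check whether a tag "repeats across comparable sessions" is to compare how often it appears in stalled sessions versus successful ones from the same segment. This is a minimal sketch, assuming each reviewed session is stored as a set of tag names; the `min_gap` threshold of 0.3 is an arbitrary illustration, and with only a handful of sessions these rates are noisy, so the output is a list of patterns to re-check rather than a finding.

```python
from collections import Counter


def tag_rates(tagged_sessions):
    """Share of sessions that carry each tag; each session is a set of tag names."""
    if not tagged_sessions:
        return {}
    counts = Counter(tag for tags in tagged_sessions for tag in set(tags))
    return {tag: n / len(tagged_sessions) for tag, n in counts.items()}


def distinguishing_tags(stalled, successful, min_gap=0.3):
    """Tags at least min_gap more common in stalled sessions than in successful ones."""
    stalled_rates = tag_rates(stalled)
    success_rates = tag_rates(successful)
    gaps = {tag: rate - success_rates.get(tag, 0.0) for tag, rate in stalled_rates.items()}
    # Largest gap first, so the strongest candidate pattern is reviewed first.
    return sorted((t for t, g in gaps.items() if g >= min_gap), key=lambda t: -gaps[t])


stalled = [{"Looping", "Hesitation"}, {"Looping"}, {"Looping", "Quiet exit"}, {"Hesitation"}]
successful = [{"Hesitation"}, {"Hesitation"}, set(), {"Looping"}]
print(distinguishing_tags(stalled, successful))
```

In this toy data, Hesitation appears equally often in both cohorts, so it is treated as a normal pause rather than a stall signal, which is the overreading the comparison cohort is meant to prevent.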

5. Ask targeted feedback when behavior is ambiguous

Behavior often shows that friction exists without explaining the reason. That is the moment to ask a short contextual question.

Use a targeted prompt when the session shows:

- hesitation before a setup or permission step;

- repeated retries without an obvious technical error;

- exits after seeing a value proposition or empty state;

- side paths into docs, security, pricing, or integrations;

- role confusion that is visible but not fully explainable.

Keep the question specific:

| Behavior seen | Targeted question |
|---|---|
| User exits at integration setup | “What stopped you from connecting this step today?” |
| User loops between docs and setup | “What information were you looking for before continuing?” |
| User pauses before inviting a teammate | “What would make inviting a teammate feel safe or useful right now?” |
| User exits after an empty state | “What did you expect to see after setup?” |

For survey mechanics, see the guide on how to collect targeted user feedback with Monolytics Surveys. For activation-specific survey work, see the guide on how to validate activation issues with in-app surveys.
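The question table can double as a lookup so that each observed behavior maps to exactly one prompt. The sketch below is hypothetical: the behavior keys are invented labels, and the two guard conditions (ask at most once per session, and skip the question when an obvious technical error already explains the stall) are assumptions drawn from the guidance above, not rules of any survey tool.

```python
# The questions come from the table above; the behavior keys are hypothetical labels.
TARGETED_QUESTIONS = {
    "exit_at_integration_setup": "What stopped you from connecting this step today?",
    "loop_docs_setup": "What information were you looking for before continuing?",
    "pause_before_invite": "What would make inviting a teammate feel safe or useful right now?",
    "exit_after_empty_state": "What did you expect to see after setup?",
}


def pick_question(behavior, already_asked, obvious_technical_error):
    """Return one targeted question for the observed behavior, or None."""
    if already_asked:
        return None  # one short question per session keeps the prompt targeted
    if obvious_technical_error:
        return None  # investigate or fix the error before asking why the user stopped
    return TARGETED_QUESTIONS.get(behavior)


print(pick_question("loop_docs_setup", already_asked=False, obvious_technical_error=False))
```

Returning `None` for unknown behaviors is deliberate: a question that does not match the step the user is on is no longer targeted.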

6. Choose the next action

The output of onboarding friction analysis should be a decision, not a long notes document.

Choose one next action:

| Evidence state | Next action |
|---|---|
| Repeated behavior, clear fix, low product risk | Fix copy, ordering, UI guidance, or error handling |
| Repeated behavior, unclear reason | Ask a targeted question or run a short interview |
| Behavior visible, event tracking weak | Instrument the missing step before changing the flow |
| Conflicting signals between stalled and successful sessions | Review a narrower segment or compare another cohort |
| Friction appears useful but poorly explained | Keep the step and improve context, proof, or expectation-setting |
| Pattern is rare or outside the target segment | Monitor or postpone |

When the next action is a product experiment, connect the finding to a testable hypothesis. For a broader conversion loop, see the guide on how to turn feedback into conversion experiments.
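Encoding the decision table as a function forces the team to agree on which evidence state wins when several apply at once. This is a sketch under stated assumptions: the `Evidence` fields are hypothetical yes/no judgments made during review, and the precedence order (rare or off-segment patterns first, then weak tracking, conflicting signals, useful friction, unclear reason) is one reasonable reading of the table, not an order the table itself defines.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    repeated: bool           # seen across comparable stalled sessions
    in_target_segment: bool
    tracking_ok: bool        # the stuck step is measured by events
    conflicting: bool        # stalled and successful sessions disagree
    friction_useful: bool    # the step creates confidence or context
    reason_clear: bool
    low_risk_fix: bool


def next_action(e):
    """Map an evidence state to one next action, following the table above."""
    if not e.repeated or not e.in_target_segment:
        return "Monitor or postpone"
    if not e.tracking_ok:
        return "Instrument the missing step before changing the flow"
    if e.conflicting:
        return "Review a narrower segment or compare another cohort"
    if e.friction_useful:
        return "Keep the step and improve context, proof, or expectation-setting"
    if not e.reason_clear:
        return "Ask a targeted question or run a short interview"
    if e.low_risk_fix:
        return "Fix copy, ordering, UI guidance, or error handling"
    # Not covered by the table: a clear reason but a riskier change.
    return "Turn the finding into a testable hypothesis and experiment"


print(next_action(Evidence(True, True, True, False, False, True, True)))
```

The final fallback is an addition for the case the table leaves open; a team could just as well route it back to review.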

Onboarding Friction Decision Kit

Use this kit when the team needs a lightweight artifact for a product, growth, or UX review. It keeps the analysis focused on the first-value path, the stalled segment, the evidence, and the next decision.

First-value path audit

| Step | User-visible goal | Required dependency | Evidence to check | Risk |
|---|---|---|---|---|
| Signup complete | User can enter the product | Account and email state | Signup completion, form errors, source/device split | User reaches product with unclear next step |
| Role or use case selected | Product can route the path | User knows their role or job | Role selection behavior, skipped fields, backtracking | Wrong onboarding path |
| Setup started | User knows what to configure | Permission, integration, import, teammate, or data source | Setup-entry rate, pauses, docs visits | Hidden work appears too late |
| First workflow configured | User can do one meaningful action | Required product state is ready | Session replay, errors, retries, support notes | Setup effort blocks value |
| First value shown | User can recognize progress | Product shows a result, insight, preview, or next action | First-value event, return rate, targeted feedback | User cannot tell whether setup worked |

Stalled-session triage matrix

| Session type | What it means | Action |
|---|---|---|
| Watch now | Session matches the segment, reached the stuck step, and failed to reach first value | Review next and tag behavior |
| Compare with success | Session belongs to the same segment and reached first value | Review enough examples to separate normal pauses from risky friction |
| Ask feedback | Behavior shows hesitation but reason is unclear | Trigger or send a short contextual question |
| Instrument first | Session reaches a step the product does not currently measure well | Add the missing event, state, or property before deciding |
| Park | Session is outside the segment or lacks enough context | Do not use it to drive the current decision |
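The matrix can be applied as a short sequence of checks when sorting a review queue. This is a minimal sketch: the `SessionFacts` fields are hypothetical yes/no facts a reviewer or export would fill in, and the order of the checks is an assumption about which bucket should win when more than one row seems to apply.

```python
from dataclasses import dataclass


@dataclass
class SessionFacts:
    in_segment: bool
    reached_stuck_step: bool
    reached_first_value: bool
    stuck_step_tracked: bool      # the product emits an event for the stuck step
    unexplained_hesitation: bool  # tagged Hesitation in review, reason still unclear


def triage(s):
    """Assign one triage bucket from the matrix above."""
    if not s.in_segment:
        return "Park"
    if s.reached_first_value:
        return "Compare with success"
    if not s.stuck_step_tracked:
        return "Instrument first"
    if not s.reached_stuck_step:
        return "Park"  # not enough context for the current decision
    if s.unexplained_hesitation:
        return "Ask feedback"
    return "Watch now"


print(triage(SessionFacts(True, True, False, True, False)))
```

Checking segment membership first keeps off-segment sessions out of every other bucket, which matches the matrix's rule that parked sessions should not drive the current decision.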

Onboarding friction taxonomy

| Pattern | Common evidence | Possible response |
|---|---|---|
| Unclear value | User reaches setup but does not understand the payoff | Show the first-value outcome earlier or preview the result |
| Hidden setup work | User discovers integrations, imports, or permissions after signup | Set expectations before signup or before setup starts |
| Role mismatch | User needs another teammate or permission level | Route by role, add invite path, or explain the handoff |
| Permission or security blocker | User opens security, privacy, docs, or admin settings before continuing | Put proof and permission guidance near the decision |
| Dead-end empty state | User sees a blank state and exits | Add example data, next action, or progress explanation |
| Error recovery | User retries fields or setup after validation errors | Make errors actionable and preserve progress |
| Quiet exit | User leaves without obvious failure | Add a targeted question or compare successful sessions before guessing |

Decision log row

Copy this row into the issue, experiment brief, or research note after the review.

| Decision risk | Segment | Evidence reviewed | Repeated pattern | Confidence | Next action | Follow-up signal |
|---|---|---|---|---|---|---|
| Example: new admins reach integration setup but do not connect data | Self-serve B2B trial admins on desktop | 10 stalled sessions, 5 successful sessions, 1 targeted prompt | Stalled sessions loop between setup and docs before exiting at permissions | Medium: repeated behavior plus feedback support | Add permission expectation copy, admin invite option, and one setup prompt | More users connect data or invite the right teammate from the same segment |

Keep the row plain. A useful decision log helps the team act and later check whether the change moved the follow-up signal.
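Teams that keep the decision log in a tracker or a markdown file can generate rows from a small record type so the seven columns never drift. The `DecisionLogRow` class and `HEADER` constant below are hypothetical helpers, a sketch rather than a required format; the example values are taken from the sample row above.

```python
from dataclasses import astuple, dataclass

HEADER = ("| Decision risk | Segment | Evidence reviewed | Repeated pattern "
          "| Confidence | Next action | Follow-up signal |")


@dataclass
class DecisionLogRow:
    decision_risk: str
    segment: str
    evidence_reviewed: str
    repeated_pattern: str
    confidence: str
    next_action: str
    follow_up_signal: str

    def to_markdown(self):
        # Escape pipes so free-text cells cannot break the table layout.
        cells = [str(value).replace("|", "\\|") for value in astuple(self)]
        return "| " + " | ".join(cells) + " |"


row = DecisionLogRow(
    decision_risk="New admins reach integration setup but do not connect data",
    segment="Self-serve B2B trial admins on desktop",
    evidence_reviewed="10 stalled sessions, 5 successful sessions, 1 targeted prompt",
    repeated_pattern="Stalled sessions loop between setup and docs before exiting at permissions",
    confidence="Medium: repeated behavior plus feedback support",
    next_action="Add permission expectation copy, admin invite option, and one setup prompt",
    follow_up_signal="More users connect data or invite the right teammate from the same segment",
)
print(HEADER)
print(row.to_markdown())
```

Keeping the follow-up signal as a required field makes it harder to log a change without naming how the team will check it later.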

Common onboarding friction patterns

Unclear first value

The user completes account creation but cannot tell what they are supposed to achieve next. This often creates short sessions, repeated navigation, and exits after landing on a generic dashboard.

Improve this by naming the first outcome, showing progress, and making the next action obvious. For SaaS onboarding replay examples, see the guide to session replay for SaaS onboarding teams.

Setup before payoff

Some setup is unavoidable. The risk is asking for effort before the user understands why it matters.

If users pause at imports, permissions, snippets, or integrations, inspect whether the payoff is visible before the commitment. If the same issue starts during signup, pair this analysis with the guide on why users abandon signup forms before submitting and with the signup friction diagnostic checklist.

Wrong role, wrong task

B2B onboarding often fails when the first user is not the right actor for the next step. An evaluator may need product proof. A champion may need an admin invite. A technical admin may need integration details. Treat those as separate paths.

Permission or security blocker

Security, privacy, billing, and permissions are not always objections. Sometimes they are necessary confidence checks. The fix may be clearer proof and expectation-setting rather than removing the step.

Quiet exit after an empty state

A quiet exit can be easy to miss because there is no error. The user simply leaves after seeing a blank dashboard, incomplete setup state, or vague success message. Review successful sessions from the same segment before deciding whether the empty state itself is the blocker.

Trial friction after onboarding

If users reach first value but still do not continue toward paid conversion, the problem may have moved from onboarding to trial evaluation. Use the guide on trial-to-paid drop-off signals product teams should watch as the next diagnostic layer.

Where Monolytics fits

Monolytics is useful when the team needs to move from “users are dropping off” to “this segment repeatedly stalls at this step, and here is the next action.”

Use Monolytics Records when the path is known and the team needs exact sessions around a page, event, source, or failed outcome. Use Monolytics Research when the team needs repeated failed-session patterns across a broader cohort.

Use targeted surveys when behavior shows friction but not the user’s reason. For small teams that need a weekly operating loop around activation, see the guide to user feedback workflows for early-stage startups.

For product context, see how teams use Monolytics to see UX issues and conversion blockers, and review Monolytics pricing when the workflow is part of a product evaluation.

FAQ

What is onboarding friction?

Onboarding friction is any step that slows a new user before they reach, configure, or recognize product value. It can be avoidable confusion, necessary commitment, hidden setup work, role mismatch, or unclear first-value guidance.

Is all onboarding friction bad?

No. Some friction helps users make a deliberate decision, choose the right path, invite the right teammate, or complete a sensitive setup step. The problem is friction that adds effort without creating confidence, context, or progress.

How do you identify onboarding friction?

Map the first-value path, define stalled and successful cohorts, review behavior around the stuck step, tag observable friction, and ask targeted feedback when behavior does not explain the reason clearly.

How do you analyze onboarding drop-off?

Start with the exact step where users stop progressing. Then compare stalled sessions with successful sessions from the same segment. Use funnel data to locate the drop-off, session evidence to inspect what happened, and feedback to clarify ambiguous behavior.

What evidence should product teams review before changing onboarding?

Review completion data for each first-value step, failed and successful session examples, friction tags, support or sales context, and targeted feedback when the reason is unclear. Avoid changing onboarding because of one vivid replay or one survey answer.

How do surveys fit into onboarding friction analysis?

Surveys are useful when behavior shows hesitation, looping, retries, or exit but does not explain the reason. Keep the question short, contextual, and tied to the step the user is on.

Can session replay prove why users abandoned onboarding?

No. Session replay can show observable behavior and context, but it cannot prove exact motivation by itself. Use replay to find patterns, then support the decision with comparison sessions, metrics, feedback, or follow-up research.

Related Monolytics workflows

Use the signup abandonment diagnostic as the parent diagnostic when signup, setup, or first-value behavior needs a broader frame.

Pair this page with the activation survey validation guide and the session replay for SaaS onboarding guide when the same cohort shows hesitation before activation.

When the pattern points to broken behavior, missing feedback, or setup uncertainty, move the evidence into the See every bug workflow and keep implementation context grounded in the event tracking setup guide.

Final takeaway

Onboarding friction analysis should produce one clear decision: keep the step and explain it better, remove avoidable confusion, ask a targeted question, fix missing instrumentation, test a small change, or postpone the issue.

The strongest teams do not redesign onboarding from a blended drop-off chart. They map the first-value path, compare stalled and successful journeys, describe behavior carefully, and choose the next action with enough evidence to learn from the result.