User Feedback Workflows for Early-Stage Startups

User feedback workflows for startups work best when they stay small, repeatable, and close to a real product decision. Early-stage teams do not need a heavyweight research program before they learn from users. They need a weekly loop that connects what users did, what they said, who should be contacted next, and what the team will change.

The risky version of user feedback is collecting more input than the team can interpret: survey answers, support notes, founder calls, sales objections, analytics events, session replays, feature requests, and roadmap votes. That pile can feel useful, but it often turns into scattered opinions.

The stronger version starts with one decision risk. For example: new users are not finishing onboarding, trial users are not reaching the first value moment, people open a feature but do not use it, or signup visitors stop before completing the form. Then the team reviews behavior, asks one contextual question, talks to the right users, ships one small change, and checks a follow-up signal.

Last reviewed: April 29, 2026. This guide focuses on early-stage SaaS and product-led teams. It does not treat feedback as proof of product-market fit, and it does not replace user interviews, support conversations, product analytics, feedback boards, or structured research.

Why early-stage feedback gets noisy

Early-stage teams are close to users, which is useful. Founders talk to customers, support sees repeated confusion, product leads hear feature requests, and growth teams see where activation stalls. The problem is that those signals arrive in different formats and with different levels of reliability.

A request in a feedback board may show demand, but it may not show whether the request blocks activation. A support ticket may show frustration, but it may come from an edge case. A session replay may show hesitation, but it may not reveal the user’s reason. A survey answer may explain a moment, but it may not represent the whole segment.

That is why a startup feedback system should not begin with the tool. It should begin with the decision the team needs to make.

Use a lightweight workflow when the team is asking questions like:

- Why do new users stop during onboarding?

- Why do trial users sign up but never reach the first useful action?

- Why do users open a feature and then leave without trying it?

- Which repeated support question points to a product or messaging fix?

- Which pricing or signup uncertainty should we address first?

- Which feedback request is evidence of a real blocker, and which one is a preference?

Those are not abstract research questions. They are operating questions. The answer should become a product change, onboarding change, message change, support improvement, or follow-up conversation.

What a useful startup feedback workflow needs to decide

A good workflow narrows feedback to one decision risk. That risk should be specific enough that the team can inspect behavior and ask the right question.

Onboarding confusion

Onboarding confusion happens when users start setup but do not understand what the current step does, why it matters, or what should happen next. Behavior can show repeated setup visits, backtracking, idle time, exits before first value, or users returning to help content.
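To make these signals concrete, here is a minimal sketch that summarizes one session's ordered setup events. It assumes each session can be exported as a list of step names; the step names (`create_workspace`, `connect_data`, and so on) and the helper `onboarding_signals` are hypothetical. Idle time is left out because it needs timestamps.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical onboarding steps, in the order the product expects them.
SETUP_STEPS = ["create_workspace", "connect_data", "configure_fields", "first_report"]
FIRST_VALUE_STEP = "first_report"


@dataclass
class OnboardingSignals:
    repeated_steps: list[str]
    backtracked: bool
    reached_first_value: bool


def onboarding_signals(step_events: list[str]) -> OnboardingSignals:
    """Summarize one session's ordered setup-step events."""
    counts = Counter(step_events)
    # Steps the user visited more than once, reported in setup order.
    repeated = [step for step in SETUP_STEPS if counts[step] > 1]

    # Backtracking: returning to a step earlier than the furthest one reached.
    furthest = -1
    backtracked = False
    for step in step_events:
        if step not in SETUP_STEPS:
            continue  # ignore non-setup events such as help-page views
        index = SETUP_STEPS.index(step)
        if index < furthest:
            backtracked = True
        furthest = max(furthest, index)

    return OnboardingSignals(
        repeated_steps=repeated,
        backtracked=backtracked,
        reached_first_value=counts[FIRST_VALUE_STEP] > 0,
    )
```

For example, a session of `["create_workspace", "connect_data", "create_workspace", "connect_data"]` reports both steps as repeated, flags backtracking, and shows that first value was never reached.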

For deeper onboarding diagnosis, pair this workflow with the guide on how to analyze onboarding drop-off in B2B SaaS.

Weak activation

Weak activation happens when users create an account but never reach the action that proves they experienced value. The team may see signups in analytics, but the product still feels quiet because users do not import data, create a project, invite a teammate, finish setup, or use the core feature.

Use activation surveys when event data shows drop-off but the reason is unclear.
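Before writing the survey, it helps to know how many users actually miss the activation event. The sketch below assumes signup and first-activation timestamps can be exported per user and treats a seven-day window as the definition of "reached value"; both the window and the function name `activation_rate` are assumptions to adjust for your product, not a standard.

```python
from datetime import datetime, timedelta


def activation_rate(signups: dict[str, datetime],
                    activations: dict[str, datetime],
                    window: timedelta = timedelta(days=7)) -> tuple[float, list[str]]:
    """Share of signed-up users who reached the activation event within `window`.

    signups:     user_id -> signup time
    activations: user_id -> time of the first activation event (e.g. first import)
    Returns (rate, sorted ids of users who did not activate in time).
    """
    if not signups:
        return 0.0, []
    not_activated = []
    for user_id, signed_up_at in signups.items():
        activated_at = activations.get(user_id)
        if activated_at is None or activated_at - signed_up_at > window:
            not_activated.append(user_id)
    rate = 1 - len(not_activated) / len(signups)
    return rate, sorted(not_activated)


t0 = datetime(2026, 4, 1)
signups = {"a": t0, "b": t0, "c": t0, "d": t0}
activations = {"a": t0 + timedelta(days=1), "b": t0 + timedelta(days=10)}
print(activation_rate(signups, activations))  # (0.25, ['b', 'c', 'd'])
```

The list of users who did not activate in time is also a natural audience for the activation survey.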

Unclear feature value

Sometimes users open a feature, scan it, and leave. That behavior does not automatically mean the feature is unwanted. It may mean the empty state is vague, the benefit is unclear, the first action is hidden, the user lacks data, or the feature appears too advanced for the current moment.

Feature adoption micro-surveys are useful when the question is not “do users want this?” but “what is missing before this user would try it?”

Repeated support questions

Support-heavy feedback loops are common in early-stage products. A repeated question can point to missing help content, unclear UI copy, a broken expectation, or a product workflow that asks users to guess.

Do not treat every repeated support question as a feature request. Match it to behavior. If users repeatedly visit the same help article and then abandon the same task, the fix may be inline guidance rather than a new feature.

Signup or pricing hesitation

Early-stage teams often hear feedback after signup, but hesitation can happen earlier. Visitors may reach pricing, inspect plan details, start signup, and stop. That behavior is useful feedback even before any survey answer exists.

If the problem is signup, use the signup abandonment diagnostic. If it starts on the pricing page, see the guide on pricing-page evaluation friction.
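When the signup form is the suspect, one quick behavior check is where abandoned attempts stop. This hypothetical helper assumes each signup session has been exported as an ordered list of the form fields the visitor interacted with, plus a completion flag; the field names are illustrative.

```python
from collections import Counter


def abandonment_by_last_field(sessions: list[dict]) -> Counter:
    """For abandoned signups only, count which form field was touched last.

    Each session is assumed to look like
    {"fields": ["email", "company", "phone"], "completed": False}.
    Completed signups and sessions with no field interaction are skipped.
    """
    last_fields = Counter()
    for session in sessions:
        if session["completed"] or not session["fields"]:
            continue
        last_fields[session["fields"][-1]] += 1
    return last_fields


sessions = [
    {"fields": ["email", "phone"], "completed": False},
    {"fields": ["email", "phone"], "completed": False},
    {"fields": ["email"], "completed": False},
    {"fields": ["email", "phone", "company"], "completed": True},
]
print(abandonment_by_last_field(sessions).most_common(1))  # [('phone', 2)]
```

A field that keeps appearing as the last one touched is a candidate for a contextual question or a changed field requirement.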

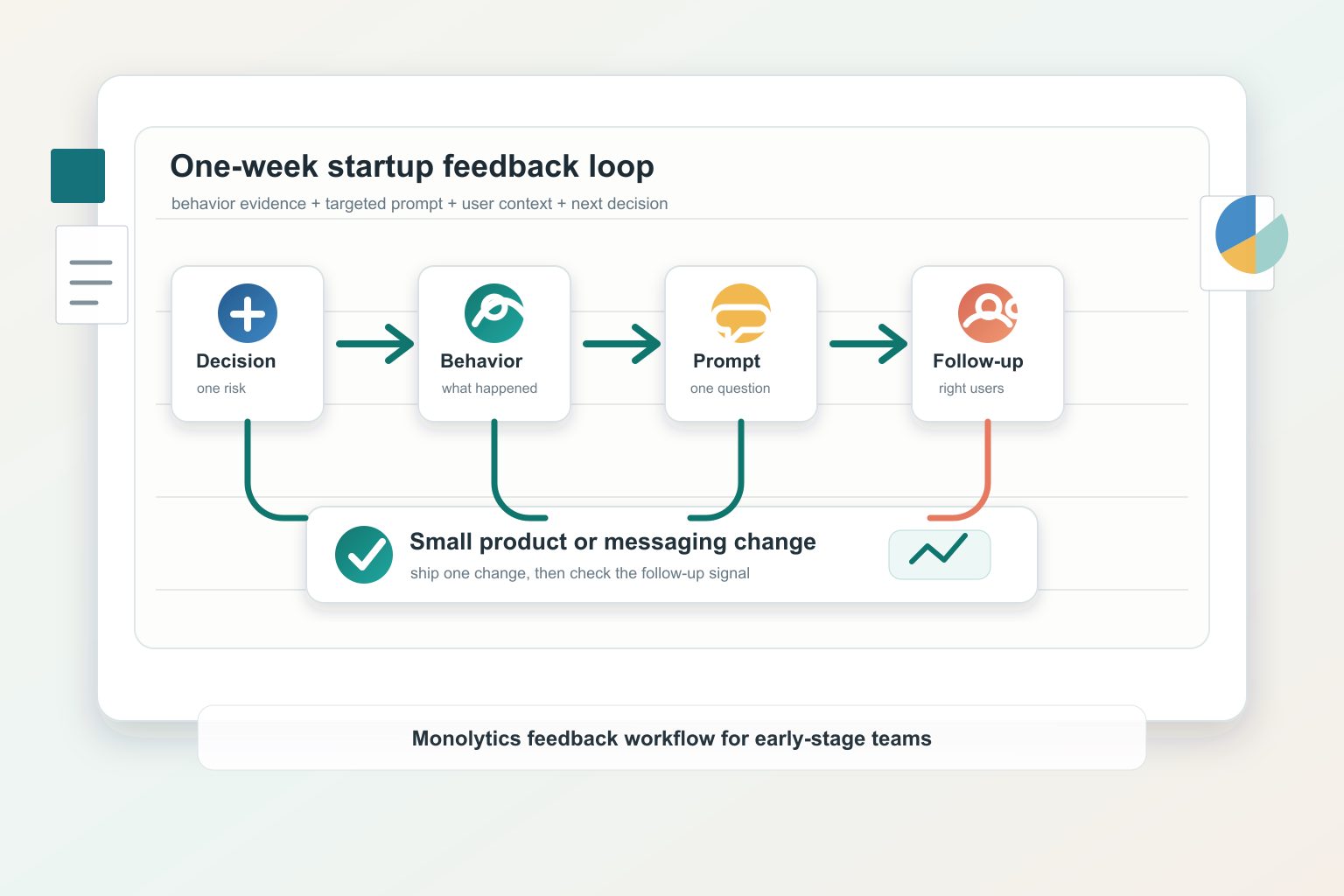

The one-week feedback loop

This loop is intentionally small. It is designed for a founder, product lead, growth operator, or small team that can run it every week without turning feedback into a separate research department.

1. Pick one decision risk

Write the risk in one sentence:

- “New trial users do not finish setup.”

- “Users open the import feature but do not complete an import.”

- “Pricing visitors start signup and stop before account creation.”

- “Support keeps answering the same onboarding question.”

Avoid broad goals like “understand users better.” Broad goals create broad feedback. The weekly loop needs one product decision.

2. Review behavior evidence

Look at sessions, paths, events, and repeated patterns around the decision risk. Behavior evidence helps you see what happened before asking why.

Use Monolytics Research when you need to find repeated patterns across failed sessions. Use targeted recording when the route is already known, such as a campaign, onboarding step, signup path, or failed outcome.

Do not overread one replay. One session can create a hypothesis. Repeated behavior across comparable sessions makes the hypothesis stronger.
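The difference between a hypothesis and a repeated pattern can be made explicit with a threshold. A minimal sketch, assuming each reviewed session has already been tagged with pattern labels (the labels and the thresholds of three sessions and 20% share are hypothetical choices, not a rule):

```python
from collections import Counter


def repeated_patterns(session_patterns: dict[str, set[str]],
                      min_sessions: int = 3,
                      min_share: float = 0.2) -> dict[str, float]:
    """Keep only patterns seen across several comparable sessions.

    session_patterns maps session_id -> set of pattern labels observed in that
    session. Because the values are sets, each pattern counts once per session.
    A pattern is returned (with its share of sessions) only when it appears in
    at least `min_sessions` sessions and at least `min_share` of all sessions.
    """
    total = len(session_patterns)
    if total == 0:
        return {}
    counts = Counter(p for patterns in session_patterns.values() for p in patterns)
    return {
        pattern: count / total
        for pattern, count in counts.items()
        if count >= min_sessions and count / total >= min_share
    }


sessions = {
    "s1": {"backtrack_setup"},
    "s2": {"backtrack_setup", "idle_import"},
    "s3": {"backtrack_setup"},
    "s4": set(),
    "s5": {"idle_import"},
}
print(repeated_patterns(sessions))  # {'backtrack_setup': 0.6}
```

Here `idle_import` stays a hypothesis: it shows up in two sessions, which is below the chosen minimum.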

3. Ask one contextual question

When behavior shows hesitation but not the reason, ask one short question at the friction point.

Examples:

- “What made this step unclear?”

- “What were you trying to accomplish today?”

- “What is missing before you would try this?”

- “What answer were you hoping to find?”

- “What made the next step feel uncertain?”

This is where targeted user feedback is stronger than a generic survey. The question is tied to a behavior, page, event, or moment.
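A targeting rule can be expressed as a small predicate. The sketch below is hypothetical and is not the Monolytics API: it shows one question only on one page, only after the user has returned to the step at least twice, and not again within a cooldown window. The page path, visit threshold, and 14-day cooldown are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class PromptRule:
    """Hypothetical targeting rule: one question tied to one friction point."""
    page: str
    question: str
    min_step_visits: int = 2                  # user has come back to this step
    cooldown: timedelta = timedelta(days=14)  # do not re-ask the same user sooner


def prompt_to_show(rule: PromptRule, page: str, step_visits: int,
                   last_prompted_at: Optional[datetime],
                   now: datetime) -> Optional[str]:
    """Return the rule's question if this moment matches it, else None."""
    if page != rule.page or step_visits < rule.min_step_visits:
        return None
    if last_prompted_at is not None and now - last_prompted_at < rule.cooldown:
        return None
    return rule.question


rule = PromptRule(page="/setup/connect-data", question="What made this step unclear?")
now = datetime(2026, 4, 29, 12, 0)
print(prompt_to_show(rule, "/setup/connect-data", 2, None, now))
# What made this step unclear?
```

Keeping the behavior condition inside the rule is what separates this from a generic survey: the question cannot fire on a page or moment it was not written for.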

4. Talk to the right users

Survey answers are useful, but some questions need a conversation. Follow up with users who match the behavior pattern, not only users who are loudest in a feedback channel.

Good follow-up candidates include:

- users who hit the same onboarding blocker twice;

- users who answered a contextual prompt with a specific obstacle;

- users who reached a feature but never took the first meaningful action;

- users who abandoned signup after showing high intent;

- support contacts whose issue appears in multiple sessions.

The goal is not to interview everyone. It is to understand the language, context, and motivation behind a repeated pattern.
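Choosing candidates by behavior rather than volume can be sketched as a filter. This hypothetical example covers three of the criteria listed above; the field names are assumptions, and "named a specific obstacle" is approximated crudely by an answer of at least three words, so a person should still read the answers before reaching out.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class UserSignals:
    user_id: str
    same_blocker_hits: int = 0           # times the same onboarding blocker was hit
    prompt_answer: Optional[str] = None  # answer to a contextual prompt, if any
    reached_feature: bool = False
    took_first_action: bool = False


def follow_up_reasons(user: UserSignals) -> List[str]:
    reasons = []
    if user.same_blocker_hits >= 2:
        reasons.append("hit the same onboarding blocker twice")
    # Crude stand-in for "named a specific obstacle": at least three words.
    if user.prompt_answer and len(user.prompt_answer.split()) >= 3:
        reasons.append("named an obstacle in a contextual prompt")
    if user.reached_feature and not user.took_first_action:
        reasons.append("reached the feature but took no first action")
    return reasons


def follow_up_candidates(users: List[UserSignals],
                         limit: int = 5) -> List[Tuple[str, List[str]]]:
    """Users who match the behavior pattern, strongest matches first."""
    matched = [(u.user_id, follow_up_reasons(u)) for u in users]
    matched = [m for m in matched if m[1]]
    matched.sort(key=lambda m: len(m[1]), reverse=True)  # stable for ties
    return matched[:limit]


users = [
    UserSignals("u1", same_blocker_hits=2, reached_feature=True),
    UserSignals("u2", prompt_answer="ok"),
    UserSignals("u3", prompt_answer="could not find the API key", reached_feature=True),
    UserSignals("u4", reached_feature=True, took_first_action=True),
]
print([uid for uid, _ in follow_up_candidates(users)])  # ['u1', 'u3']
```

The `limit` keeps the list short on purpose: a handful of well-matched conversations is usually enough to learn the language behind a pattern.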

5. Ship or adjust one small change

The weekly loop should end with a decision. That decision may be a product change, an onboarding message, a help article, a survey prompt, a follow-up email, or a deeper research pass.

Keep the change small enough to learn from. A complete onboarding redesign is hard to attribute. A clearer setup step, better empty state, changed signup expectation, or improved inline explanation is easier to evaluate.

6. Check the follow-up signal

The loop is not closed when the team ships. It closes when the team checks whether the same risk changed.

Possible follow-up signals:

- fewer exits from the setup step;

- more users reaching the activation event;

- fewer repeated help visits for the same task;

- more feature attempts after the empty-state change;

- fewer signup stops at the same field;

- fewer support questions about the same expectation.

Treat this as directional evidence unless the sample and method support a stronger conclusion.
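One way to keep a before/after comparison honest is to check whether the change in a step's exit rate is larger than sampling noise. The sketch below applies a standard two-proportion z-test using only the Python standard library; the function name and inputs are illustrative, and even a small p-value does not rule out differences in traffic mix, season, or acquisition channel between the two periods.

```python
import math


def compare_exit_rates(exits_before: int, visits_before: int,
                       exits_after: int, visits_after: int) -> dict:
    """Two-sided two-proportion z-test on a step's exit rate before vs. after."""
    if visits_before == 0 or visits_after == 0:
        raise ValueError("both periods need at least one visit")
    p_before = exits_before / visits_before
    p_after = exits_after / visits_after
    # Pooled rate under the null hypothesis that nothing changed.
    pooled = (exits_before + exits_after) / (visits_before + visits_after)
    se = math.sqrt(pooled * (1 - pooled) * (1 / visits_before + 1 / visits_after))
    z = (p_after - p_before) / se if se > 0 else 0.0
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return {"before": p_before, "after": p_after, "z": z, "p_value": p_value}
```

With 60 exits out of 200 visits before and 40 out of 200 after, the p-value is about 0.02. The same rates on 20 visits per period give a p-value well above 0.05, which is exactly the case where the result should stay directional.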

One-Week Startup Feedback Loop Worksheet

Use this worksheet in a weekly product meeting. Fill one row at a time. If the row is vague, the workflow is probably too broad.

| Decision risk | User segment | Behavior trigger | Feedback prompt | Follow-up conversation | Product action | Follow-up signal |
|---|---|---|---|---|---|---|
| Onboarding confusion | New trial users in the first session | Repeated setup step, backtracking, or exit before first value moment | “What made this step unclear?” | Ask 2-3 users to explain what they expected the setup to do | Rewrite setup copy or split the step | Fewer exits or repeated step visits from the same segment |
| Weak activation | Users who sign up but do not reach the first useful action | No feature engagement after signup or short first session | “What were you trying to accomplish today?” | Ask what outcome they expected before signup | Change first-run path or empty-state prompt | More users reach the activation event being reviewed |
| Unclear feature value | Users who open a feature but do not use it | Feature page viewed, no meaningful action | “What is missing before you would try this?” | Ask how they currently solve the job | Clarify value copy, example state, or default template | More repeat engagement from the target segment |
| Support-heavy workflow | Users who hit help docs, chat, or error states repeatedly | Multiple support or help visits around the same task | “What answer were you hoping to find?” | Review support context with the user or support owner | Improve inline guidance or change the task flow | Fewer repeat help visits for the same task |
| Pricing or signup hesitation | Visitors who reach pricing or signup but stop before completion | Pricing checks, form abandonment, scheduler abandonment | “What made the next step feel uncertain?” | Ask what risk they needed resolved before continuing | Clarify plan fit, expectation, or field requirement | More completed qualified next steps, not only more clicks |
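If the worksheet lives in a spreadsheet or a small script, the "vague row" check can be partly automated. A minimal sketch, assuming one record per row with the seven columns above; the list of vague phrases is a hypothetical starting point that each team would tune, and it only catches obvious cases.

```python
from dataclasses import dataclass, fields

# Hypothetical phrases that usually mean a row is too broad to act on.
VAGUE_PHRASES = ("understand users", "improve ux", "get feedback", "tbd")


@dataclass
class WorksheetRow:
    decision_risk: str
    user_segment: str
    behavior_trigger: str
    feedback_prompt: str
    follow_up_conversation: str
    product_action: str
    follow_up_signal: str


def vague_columns(row: WorksheetRow) -> list[str]:
    """Return the columns that are empty or contain a known vague phrase."""
    problems = []
    for column in fields(row):
        value = getattr(row, column.name).strip().lower()
        if not value or any(phrase in value for phrase in VAGUE_PHRASES):
            problems.append(column.name)
    return problems


broad = WorksheetRow("Understand users better", "All users", "",
                     "How do you like the product?", "", "TBD", "")
print(vague_columns(broad))
# ['decision_risk', 'behavior_trigger', 'follow_up_conversation', 'product_action', 'follow_up_signal']
```

An empty result does not prove a row is good; it only means the row passed the cheapest check before the weekly meeting.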

The worksheet is useful because it forces feedback to connect to a decision. It does not ask the team to collect every possible signal. It asks the team to choose the smallest evidence set that can move one decision forward.

Feedback methods: what each one can and cannot answer

Different feedback methods answer different questions. Combining them is useful, but only if each method keeps its job.

| Method | Best question | Useful output | Limitation |
|---|---|---|---|
| Behavior review | What did users do before the drop-off or hesitation? | Session patterns, skipped steps, repeated visits, failed routes | Shows behavior, not every reason behind it |
| Targeted survey | What reason can the user give at the exact friction point? | Short answers tied to page, event, segment, or moment | Needs careful targeting and wording |
| Follow-up interview | What context, expectation, or language explains the pattern? | Motivation, vocabulary, workflow context, buying or usage constraints | Slower and less scalable |
| Support notes | What confusion repeats in the current customer base? | Recurring tasks, missing explanations, high-friction questions | Can overrepresent frustrated users |
| Feedback board | What requests are users willing to submit or vote on? | Request volume, themes, roadmap input | Does not automatically prove priority or root cause |
| Product analytics | How many users reached, skipped, or completed the step? | Frequency, funnel position, segment size | Often does not explain why |
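The "how many" question in the product analytics row usually comes down to an ordered funnel. A minimal sketch, assuming per-user sets of completed steps can be exported; the step names and the function name `funnel` are hypothetical. A user counts for a step only if they also completed every earlier step, so users who skipped a step drop out even if they reached a later one.

```python
def funnel(step_order: list[str],
           user_steps: dict[str, set[str]]) -> list[tuple[str, int, float]]:
    """Users reaching each step in order, and the share kept from the previous step."""
    results = []
    remaining = set(user_steps)
    previous = len(remaining)
    for step in step_order:
        # Keep only users who completed this step and every earlier one.
        remaining = {u for u in remaining if step in user_steps[u]}
        reached = len(remaining)
        share = reached / previous if previous else 0.0
        results.append((step, reached, share))
        previous = reached
    return results


user_steps = {
    "a": {"signup", "setup", "import"},
    "b": {"signup", "setup"},
    "c": {"signup"},
    "d": {"signup", "import"},  # skipped setup, so not counted at "import"
}
print(funnel(["signup", "setup", "import"], user_steps))
# [('signup', 4, 1.0), ('setup', 2, 0.5), ('import', 1, 0.5)]
```

The output answers where and how often; it still says nothing about why, which is the job of the behavior review and the targeted question.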

The workflow should not force all methods into every decision. For a small activation issue, behavior plus one survey prompt may be enough. For a roadmap-changing feature request, the team may need interviews, usage data, and support context before acting.

How Monolytics fits the workflow

Monolytics fits the part of the feedback system where behavior and context need to meet.

Use it when the team needs to:

- inspect sessions around onboarding, signup, pricing, or feature usage;

- compare repeated failed patterns instead of relying on one replay;

- ask targeted feedback at the point where behavior becomes ambiguous;

- turn a behavior pattern plus a survey answer into the next product decision.

The Monolytics product overview explains the broader records, research, and survey workflow. The AI session replay page is useful when the team needs to find UX issues and conversion blockers faster. If the next step is commercial evaluation, see Monolytics pricing.

For adjacent workflows, read:

- session replay analysis workflow, when the team needs to choose which recordings deserve review;

- how to analyze onboarding friction in B2B SaaS, when the loop is focused on setup and first value;

- behavior analytics for product marketing teams, when the feedback loop starts on public marketing pages;

- turn feedback into conversion experiments, when the feedback cluster needs to become an experiment brief.

Common mistakes to avoid

Treating feedback as roadmap voting

Votes and requests are useful inputs, but they are not the decision by themselves. A requested feature may point to a real job, a misunderstood workflow, missing copy, a support gap, or a niche customer need. Check the behavior and context before turning requests into roadmap work.

Collecting feedback without a decision risk

If the team cannot name the decision, the feedback will be hard to interpret. Start with the risk: onboarding, activation, feature value, support load, signup hesitation, or pricing uncertainty.

Asking broad questions at specific moments

Generic satisfaction questions are usually weak for product diagnosis. A user stuck on setup does not need to answer a brand survey. They need a question about the current blocker.

Over-instrumenting too early

Early-stage products can create too many dashboards before they have enough traffic or enough clarity. Instrument the critical path, then add questions around the places where the team must make a decision.

Ignoring behavior after a strong quote

A good quote can be persuasive, but it should not end the investigation. Check whether the behavior pattern appears in other sessions and whether the user segment matches the decision.

Claiming product-market fit from feedback

Feedback can reduce uncertainty. It can show repeated friction, language, and motivation. It cannot prove product-market fit by itself. Treat it as evidence for the next decision, not as a final verdict.

FAQ

What is a user feedback workflow for startups?

It is a repeatable process for choosing one product decision, reviewing behavior evidence, asking the right contextual question, following up with relevant users, and turning the result into a small product or messaging action.

How often should an early-stage startup collect user feedback?

Collect feedback continuously only where it is tied to a decision. A weekly review loop is often more useful than a large quarterly feedback project because it keeps evidence close to shipping decisions.

How do you combine user feedback with product analytics?

Use analytics to find where a pattern is happening and how often. Use behavior review to see what users did in that step. Use targeted feedback or interviews to understand why the pattern may be happening.

When should a startup use surveys instead of interviews?

Use a short survey when the question is narrow and tied to a moment, such as a failed setup step or feature hesitation. Use interviews when the team needs context, motivation, language, or deeper explanation.

What feedback should a startup ignore?

Ignore or park feedback that is not tied to the target segment, not connected to a current decision, contradicted by behavior evidence, or too broad to act on. It may still be useful later, but it should not drive the current loop.
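The parking rules above can be written as an ordered triage check. This is a hypothetical sketch: the `FeedbackItem` fields are assumptions, and "contradicted by behavior" and "actionable" are judgments the team records by hand, not values a tool computes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FeedbackItem:
    text: str
    segment: str
    decision: Optional[str]                 # decision risk it informs, if any
    contradicted_by_behavior: bool = False  # set after reviewing sessions
    actionable: bool = True                 # False when too broad to act on


def triage(item: FeedbackItem, target_segment: str, current_decision: str) -> str:
    """Decide whether feedback drives the current loop or is parked for later."""
    if item.segment != target_segment:
        return "park: outside target segment"
    if item.decision != current_decision:
        return "park: not tied to current decision"
    if item.contradicted_by_behavior:
        return "park: contradicted by behavior evidence"
    if not item.actionable:
        return "park: too broad to act on"
    return "use in this loop"


item = FeedbackItem("Setup step 2 is confusing", "new_trial", "onboarding confusion")
print(triage(item, "new_trial", "onboarding confusion"))  # use in this loop
```

Parked items are kept rather than deleted, which matches the point that they may still be useful in a later loop.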

How do startups avoid over-instrumenting feedback?

Start with the critical path and the current decision risk. Add behavior checks, prompts, and events only where the team needs evidence to decide what to do next.

How can startups close the feedback loop with users?

Acknowledge useful feedback, explain what changed when appropriate, and follow up with users whose input shaped the decision. Closing the loop does not always mean building the requested feature; it means showing that the team listened and acted thoughtfully.

Final takeaway

The best startup feedback workflow is not the one that collects the most comments. It is the one that regularly helps the team choose the next useful change.

Pick one decision risk. Review what users did. Ask one contextual question. Talk to the users who can explain the pattern. Ship one small change. Check the follow-up signal. Then run the loop again.
